If your AI assistant can approve refunds, access internal knowledge bases and trigger cloud workflows – who is testing whether it can be manipulated?

That question is not theoretical anymore.

Across India, firms are integrating artificial intelligence into their internal engines, fraud detection systems, HR copilots, customer support automation and executive dashboards. AI is no longer experimental. It is operational.

For CISOs and CXOs, this shifts the security conversation from infrastructure protection to behavioural validation.

Traditional VAPT may confirm that your servers and APIs are secure. But it does not answer whether your AI system can be forced to leak sensitive data, execute over-privileged tools or alter business decisions through adversarial inputs.

That is why identifying reliable AI security assessment companies in India has become a governance priority in 2026.

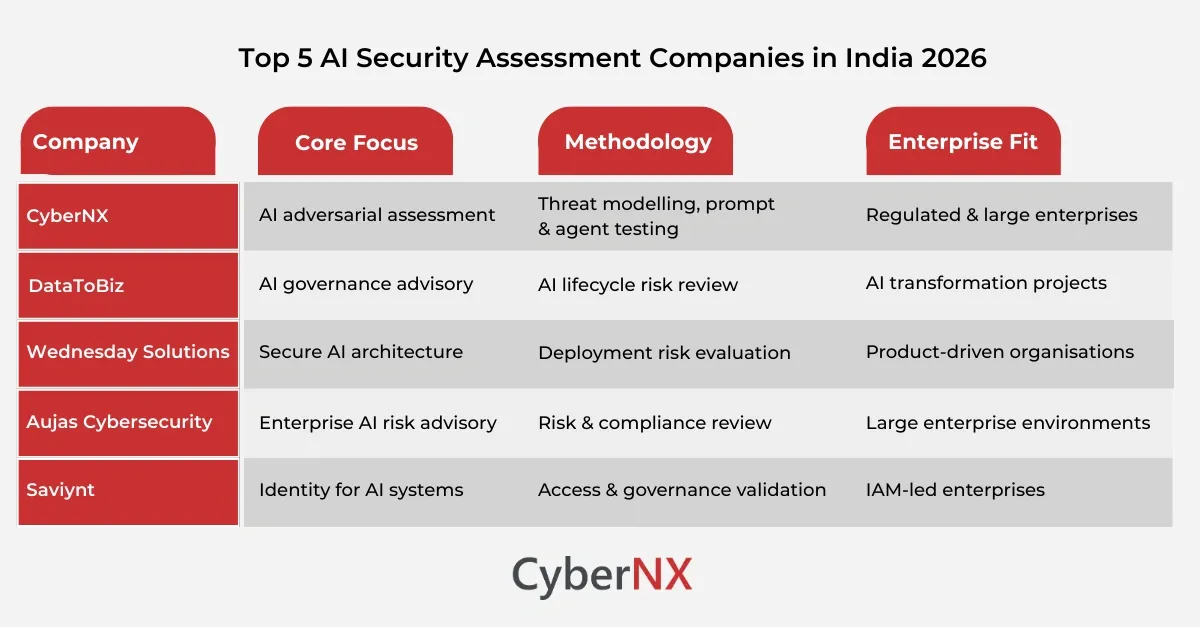

This article highlights five firms with relevant AI security assessment capabilities, evaluated through a practical enterprise lens instead of just marketing claims.

What makes a reliable AI security assessment company?

A credible AI security assessment firm should demonstrate:

- Architecture and data-flow review for AI systems

- AI-specific threat modelling

- Adversarial testing of LLMs and agents

- RAG and knowledge base validation

- Tool permission boundary testing

- Governance and monitoring control validation

- Business-impact-driven risk ranking

Top 5 AI security assessment companies in India

Below are the top 5 firms in India that provide reliable AI security assessment services:

1. CyberNX

CyberNX is an India-based cybersecurity firm with a dedicated focus on AI security assessment and adversarial validation.

The firm provides structured, end-to-end AI security reviews covering:

- Architecture and data-flow review across model, application, agent and governance layers

- AI-specific threat modelling aligned to adversarial attack patterns

- Controls validation, including authentication, authorization, sandboxing and monitoring

- Risk ranking with a structured hardening roadmap

Unlike traditional pentesting firms, CyberNX evaluates behavioural risk, workflow compromise exposure and tool permission boundaries in AI-integrated enterprise systems.

The company’s methodology includes threat modelling, adversarial testing across LLM and RAG layers, and remediation validation cycles. Deliverables are designed for both technical teams and executive stakeholders, making it suitable for regulated industries and board-level reporting.

For organisations deploying AI copilots, agent-driven workflows, or RAG over sensitive data, CyberNX positions AI security assessment as a structured governance discipline rather than a one-time technical test.

2. DataToBiz

DataToBiz is mostly known as an AI and analytics consulting firm with experience in enterprise AI implementations. The company also provides AI security and governance advisory as part of broader AI strategy engagements.

Their capabilities typically focus on:

- AI governance frameworks

- Data security controls for AI systems

- Risk management within AI lifecycle implementations

While not exclusively a cybersecurity firm, DataToBiz may support organisations looking for AI risk evaluation in the context of broader transformation initiatives.

3. Wednesday Solutions

Wednesday Solutions is a technology consulting firm based in India with experience in AI system development and enterprise software engineering. The company has been associated with AI security management services in consulting contexts.

Its relevance in AI security assessment typically includes:

- Secure architecture advisory for AI applications

- Risk evaluation during AI product development

- Governance-oriented AI deployment practices

Their positioning is more advisory-led, mostly for organisations integrating AI into digital products.

4. Aujas Cybersecurity

Aujas Cybersecurity is an established enterprise cybersecurity consulting firm in India. While traditionally focused on application and infrastructure security, the firm has expanded into advanced risk domains including AI-related advisory.

In the context of AI security assessment, Aujas may contribute through:

- Enterprise risk assessments

- Advanced security engineering reviews

- Governance and regulatory compliance alignment

Their strength lies in large-scale enterprise environments where AI risk must be evaluated within broader cybersecurity and compliance frameworks.

5. Saviynt

Saviynt is an identity security platform provider with a presence in India. Its AI-driven identity and access management capabilities intersect with AI system security.

Its relevance to AI security includes:

- Identity governance across AI-integrated environments

- Access control management

- Risk visibility for enterprise systems connected to AI workflows

Saviynt’s value in this context is related to identity and access control governance for organisations deploying AI tools within enterprise ecosystems.

Why AI security assessment is different from traditional VAPT

Security leaders evaluating AI security assessment companies in India must understand a fundamental distinction.

Traditional VAPT focuses on:

- Infrastructure vulnerabilities

- Code-level injection flaws

- Authentication misconfigurations

- Network segmentation gaps

AI security assessment focuses on:

- Prompt injection resilience

- Behavioural manipulation

- RAG poisoning

- Agent tool abuse

- Workflow compromise

- Governance and auditability

An AI chatbot infused with financial systems can be manipulated without breaching infrastructure. A knowledge base can be poisoned to alter decision outputs without even triggering alerts.

This is why AI security assessment requires adversarial testing combined with governance validation.

How CISOs should evaluate AI security assessment providers

When selecting among AI security assessment companies in India, consider the following evaluation criteria:

1. Depth of AI-specific threat modelling

Does the provider understand prompt injection, indirect injection, and tool abuse scenarios; or are they just rebranding traditional pentesting?

2. Multi-layer coverage

Can the provider test:

- Model layer

- Application layer

- RAG / knowledge layer

- Agent and tool layer

- Monitoring and governance layer

3. Business-impact-driven reporting

Do findings map to workflow compromise and regulatory exposure, or are they limited to technical severity scores?

4. Remediation validation

Does the firm conduct retesting and provide a structured hardening roadmap?

5. Executive communication

Are deliverables structured for board reporting and compliance alignment?

Security leaders should prioritise structured AI security assessment methodology over brand recognition.

The growing demand for AI security assessment in India

India’s enterprise ecosystem is rapidly adopting:

- AI customer support agents

- AI underwriting and fraud systems

- RAG-powered internal copilots

- Autonomous workflow assistants

At the same time, regulators are increasing scrutiny around data protection, governance and algorithmic accountability.

AI systems connected to CRM platforms, cloud infrastructure, financial systems and HR systems create new risk surfaces that traditional pentesting alone does not address.

As a result, the demand for specialised AI security assessment companies in India is expected to grow significantly through 2026 and beyond.

Conclusion

The conversation around AI security has moved beyond theory. For CISOs and CXOs, the real question is not whether AI systems are innovative, but whether they are resilient against adversarial manipulation.

Among AI security assessment companies in India, we have a different, structured approach to architecture review, AI-specific threat modelling and controls validation.

For organisations deploying LLMs, AI agents, or RAG-powered knowledge systems, structured AI security assessment is no longer optional.

If your firm is integrating AI into production workflows, now is the time to validate its strength under adversarial pressure.

Connect with us to initiate a structured AI security assessment and gain clarity on your organisation’s AI risk exposure before it becomes a business incident.

AI Security Assessment Companies in India FAQs

How is an AI security assessment different from traditional pentesting?

AI security assessments evaluate behaviour manipulation risks like prompt injection, RAG poisoning and agent tool abuse, while traditional pentesting focuses mainly on infrastructure and application flaws.

How often should enterprises conduct AI security assessments?

AI systems should be assessed before deployment, after major architectural changes and periodically, as integrations evolve or regulatory requirements shift.

Are AI red teaming and AI security assessments the same?

AI red teaming focuses on adversarial attack simulation. AI security assessments are broader and include architecture review, control validation, governance evaluation and remediation planning.

Can traditional VAPT vendors secure AI systems?

Traditional VAPT may identify infrastructure issues, but specialised AI security expertise is required to test model behaviour, workflow compromise risk and tool permission boundaries.

What industries in India need AI security assessment the most?

BFSI, healthcare, e-commerce, technology and government sectors deploying AI in decision-critical workflows should have structured AI security evaluation.