Artificial intelligence is not just experimental anymore. It is integrated in customer support workflows, HR copilots, fraud detection pipelines and executive dashboards.

Yet many organisations deploy AI systems without a structured security assessment framework.

Traditional security reviews are not enough. AI introduces behaviour and workflow-driven risks that conventional testing models were never designed to evaluate.

This guide explains how to conduct an AI security assessment in a way that aligns with enterprise risk management, regulatory expectations and real-world adversarial behaviour.

What is an AI security assessment?

An AI security assessment is a structured analysis of risks across the AI lifecycle, from model selection and training data to application integration. It goes beyond vulnerability scanning.

A detailed AI security assessment tests:

- Model behaviour under adversarial inputs

- Prompt injection exposure

- RAG ingestion and retrieval risks

- Agent tool permissions and boundaries

- Data leakage pathways

- Logging, monitoring and governance controls

- Workflow abuse scenarios

Unlike traditional security reviews, this process must account for how AI systems reason, interpret context and execute actions.

Why AI security assessments are different from traditional reviews

AI systems expand the attack surface beyond infrastructure.

Traditional security tests focus on:

- Code vulnerabilities

- Network exposure

- Configuration gaps

- Identity and access misalignment

AI systems introduce additional layers:

- Prompt layer manipulation

- Context poisoning

- Agent overreach into enterprise tools

- Behaviour unpredictability

This is why understanding how to conduct an AI security assessment needs a new methodology.

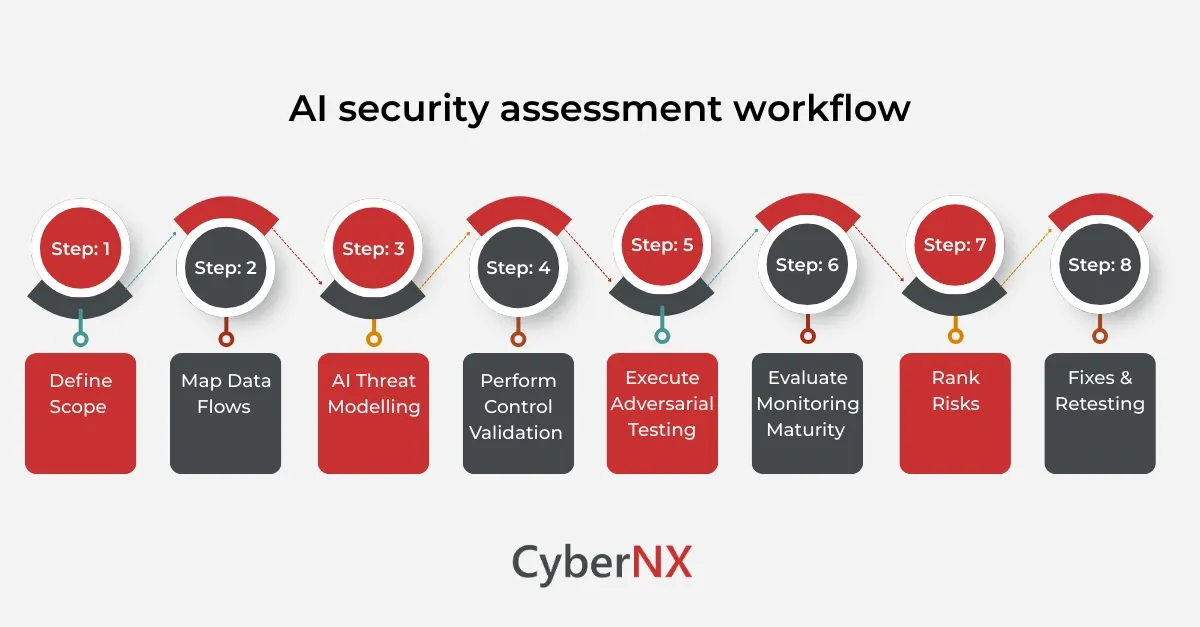

AI security assessment workflow

Conducting an AI security assessment needs a layered approach rather than isolated technical checks. The workflow below outlines how security leaders should evaluate AI systems:

Step 1: Define scope and business context/ Define scope

Before touching architecture diagrams, clarify why the AI system exists.

What business decision does it influence. What operational process depends on it. Who absorbs the impact if it fails or behaves unpredictably.

An AI model used to recommend products carries different risk from one deciding insurance eligibility. The security posture should reflect that difference.

Clarify:

- The system purpose and intended outcomes

- The stakeholders who rely on it

- The regulatory or contractual obligations in play

- The tolerance for error, manipulation, or downtime

If the organisation cannot clearly explain the business context, the assessment will drift. That is usually the first warning sign.

Step 2: Map data flows and trust boundaries/ Map data flows

AI systems are rarely standalone. They pull from internal databases, third party feeds, cloud storage, APIs, user inputs and often external model providers.

Draw the data path from ingestion to output. Include training data, live inference data, logging, feedback loops and retraining pipelines. Then mark trust boundaries.

Where does data cross from:

- Internal to external environments

- Authenticated to unauthenticated input

- Structured to unstructured sources

- Controlled infrastructure to vendor managed services

Most meaningful AI security risks appear at these boundaries. Prompt injection, data poisoning, and API abuse do not happen in isolation. They exploit misplaced trust between components.

Step 3: Conduct AI-specific threat modelling/ AI threat modelling

Traditional threat models do not fully cover AI risk patterns.

An AI-specific threat model should evaluate:

- Prompt injection vectors

- Indirect injection through documents or emails

- RAG poisoning risks

- Over-privileged tool access

- Multi-stage workflow abuse

- Sensitive data leakage through responses

- Insecure output handling

Threat modelling should simulate adversary goals, not just technical exploits.

For example:

- Can an attacker coerce the AI agent to approve refunds

- Can an insider manipulate a knowledge base to alter decisions

- Can model outputs trigger downstream execution flaws

Threat modelling sets the foundation for testing depth.

Step 4: Perform architecture and control validation

Before adversarial testing begins, validate baseline controls.

Test:

- Authentication and authorization enforcement

- Role-based access controls for AI tools

- Rate limiting and abuse detection

- Logging completeness and auditability

- Encryption for data in transit and at rest

- Model access policies and key management

This makes sure you are not testing on an insecure baseline. At this stage, leadership gains visibility into control maturity.

Step 5: Execute adversarial testing

This is the point where the assessment stops being conceptual and becomes operational.

Adversarial testing should cover prompt injection fuzzing, attempts to bypass safeguards through jailbreak techniques, and indirect injection using malicious documents embedded in trusted sources. If retrieval augmented generation is in use, simulate data poisoning within indexed content. Explore tool abuse scenarios where the model can trigger downstream actions. Test for business logic manipulation and attempt controlled sensitive data exfiltration.

Both automated and manual approaches matter.

Automation helps scale prompt fuzzing and pattern-based probing. Manual testing exposes the more inventive workflow abuse paths that scripts tend to miss.

Step 6: Evaluate governance and monitoring maturity/ Evaluate monitoring maturity

Many organisations focus heavily on prevention but ignore detection.

Evaluate:

- Prompt and tool invocation logging

- Anomaly detection capabilities

- Incident response readiness for AI abuse

- Kill-switch mechanisms

- Change management controls

Step 7: Rank risks based on business impact/ Rank risks

AI risk scoring cannot rely on CVSS alone.

Risk prioritisation should incorporate:

- Likelihood of behavioural manipulation

- Business workflow impact

- Financial exposure

- Regulatory consequences

- Reputational risk

- Ease of exploitation

This makes sure remediation aligns with enterprise priorities.

Security findings must translate into business language.

Step 8: Conduct remediation & retesting

AI risk mitigation often requires architectural adjustments, not just patches.

Remediation may include:

- Tightening tool permissions

- Implementing context validation layers

- Adding output sanitisation

- Improving logging depth

- Restricting data ingestion sources

Without retesting, fixes remain assumptions.

A mature AI security assessment is iterative, not one-time.

Common mistakes to avoid during AI security assessments

Even well-resourced organisations make avoidable errors. Below are some of the common errors:

- Running traditional pentesting only Result: AI behavioural risks remain untested.

- Ignoring tool permission boundaries Result: Agents may have broader access than intended.

- Treating prompt injection as theoretical Result: It is one of the most exploited AI attack vectors.

- Failing to align with governance frameworks Result: Assessment results must support audit and compliance needs.

Avoiding these mistakes improves both resilience and executive confidence.

When should organisations conduct an AI security assessment?

You should prioritise an AI security assessment when:

- Launching AI chatbots or copilots

- Integrating AI agents with enterprise tools

- Enabling RAG over sensitive data

- Operating in regulated industries

- Preparing for compliance audits

- Expanding AI automation into decision-critical workflows

If AI influences customer experience or operational decisions, assessment is no longer optional.

Conclusion

Understanding how to conduct an AI security assessment is now a leadership responsibility.

AI introduces behavioural risk, workflow exposure and governance complexity that traditional reviews cannot fully address.

Organisations using AI at scale need a disciplined approach that combines offensive testing, governance validation and executive-ready reporting.

We offer reliable AI security assessments that are meant to give a structured review of your AI solutions end-to-end. Our service includes architecture and data flow review, threat modelling, controls validation and risk ranking roadmap.

If you are integrating AI systems into your systems, now is the time to validate them under adversarial pressure.

Connect with our team to initiate a structured AI security assessment and gain clarity on your true AI exposure.

How to conduct an AI security assessment FAQs

How long does an AI security assessment take?

Depending on scope and integration complexity, it may typically range from three to six weeks including threat modelling, testing and retesting.

Is AI penetration testing part of an AI security assessment?

Yes. Adversarial testing, including prompt injection and tool abuse simulation is a core part of a complete assessment.

Can internal security teams conduct AI security assessments alone?

Internal teams can perform baseline reviews, but specialised AI expertise greatly improves depth and coverage.

How often should AI systems be tested?

They should ideally be tested before deployment, after major changes and periodically as integrations or regulatory expectations shift.

Does an AI security assessment help with compliance?

Yes. A structured assessment provides evidence for governance, audit readiness and risk documentation with new AI regulatory frameworks.