Over the past few years, Artificial Intelligence (AI) has transformed the cybersecurity landscape. From spotting hidden vulnerabilities to automating threat hunting, AI promises unmatched defensive strength. Yet this very promise carries danger. AI has become a “double-edged sword” in cybersecurity, capable of empowering defenders at unprecedented scale while simultaneously enabling adversaries to act faster and more effectively than ever before. This tension defines the security landscape in 2026 – and it shapes how organisations must think about AI adoption.

The numbers underscore this reality:

- Security teams increasingly cite AI-driven attacks as their biggest challenge, with 62 % of managers and 53 % of C-suite cyber leaders confirming this trend in organisational threat reports.

- At the same time, AI is detecting critical vulnerabilities that traditional tools miss, such as over 500 new high-severity flaws found by advanced models in open-source codebases. These figures highlight the dichotomy confronting security strategy today.

In this blog, we explore AI’s benefits, risks, use cases, future trends, governance needs, and practical strategies for balancing innovation with responsibility. The goal for security leaders is not to fear AI, but to harness it safely and effectively.

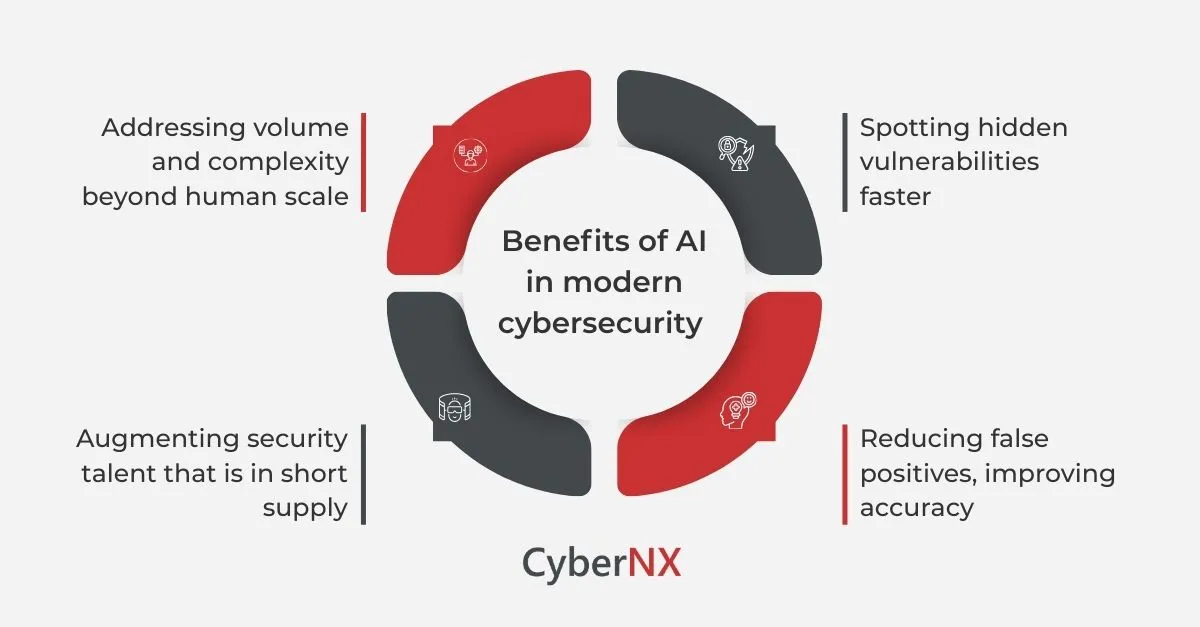

Why AI Is becoming central to modern cybersecurity

1. Addressing volume and complexity beyond human scale

Organisations today generate enormous amounts of digital activity – security logs, user behaviour data, cloud telemetry, and application code. Manual threat analysis struggles to keep pace with billions of events per day. AI can process this volume at machine speed, spotting patterns and anomalies humans might never see.

According to security benchmark surveys,

- over 90 % of frontline managers report increased attack frequency, with severity growing in tandem. AI-driven analytics, behavioural models, and anomaly detection engines help organisations prioritise signals over noise in this data deluge.

This is critical because time to detect and respond remains a key differentiator in breach impact mitigation. Data from industry reports shows that reducing dwell times by even a few hours can significantly limit data loss and financial damage.

2. Spotting hidden vulnerabilities faster

One of AI’s most striking defensive advantages lies in software vulnerability discovery. Traditional static tools often miss complex logic flaws or chained weaknesses across systems. Advanced AI models – especially those trained on large code corpora and reasoning tasks – can recognise and prioritise subtle patterns that otherwise remain hidden.

For example, an AI model recently identified more than 500 previously unknown high-severity vulnerabilities in open-source libraries that traditional scanning tools overlooked. This scale of discovery not only accelerates remediation but also helps reduce exposure windows – a persistent source of risk for organisations worldwide.

3. Reducing false positives, improving accuracy

Operational fatigue – spent chasing alerts that turn out to be harmless – remains a major drain on Security Operations Centre (SOC) effectiveness. AI improves accuracy by prioritising alerts based on risk context and historical impact. Machine learning models reduce false positives, enabling analysts to focus investigation efforts where they matter most.

In addition to speed, AI delivers contextual insight that brings deeper meaning to anomaly signals – a major operational advantage over traditional rule-based systems.

4. Augmenting security talent that is in short supply

The cybersecurity talent shortage is well documented. Industry estimates put the global shortfall in security roles in the millions. AI functions as a force multiplier – enabling fewer analysts to do far more. It handles repetitive tasks, suggests remediation steps, and supports investigative workflows that historically demanded specialist expertise.

This pairing of human experience with AI assistance lets organisations scale security outcomes without proportionally increasing headcount.

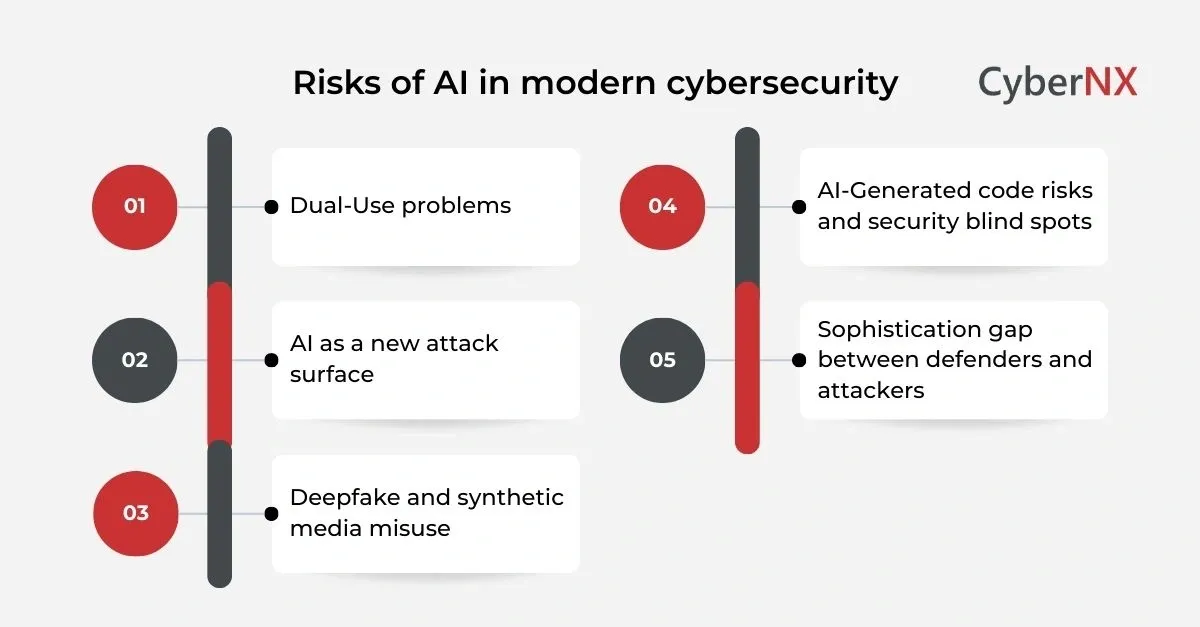

The risks AI introduces in cybersecurity

While AI’s defensive value is substantial, the technology also introduces new risks that cannot be overlooked.

Dual-Use problems: defensive advantage or offensive weapon?

By design, AI excels at analysing vast datasets and recognising patterns. These capabilities benefit defenders — but they also assist attackers.

Security professionals now warn that AI-driven attacks could scale dramatically, automating steps like reconnaissance, code scanning, and exploit development. A Microsoft security report highlights that advanced AI agents can potentially automate entire attack chains – from discovery to exploitation – at speeds unattainable by humans alone.

This dual-use nature complicates defensive strategy. What tools and methods should be openly deployed when adversaries may have access to similar capabilities?

AI as a new attack surface

Deploying AI models without robust governance can expose organisations to new kinds of vulnerabilities. Techniques like prompt injection – where an adversary subtly manipulates model inputs – can lead to unintended model behaviour or data leaks. National cybersecurity agencies now classify prompt injection as a critical threat, capable of undermining model integrity and decision outputs.

As AI moves deeper into security workflows – from ticketing systems to automated response platforms – weak model configuration or unmonitored outputs can unintentionally cause operational harm or amplify errors.

Deepfake and synthetic media misuse

AI-generated synthetic content – audio, images or video – is surfacing in cybercrime contexts with real impact. Deepfake scams have produced multi-million-dollar fraud cases, including large-scale impersonation and financial loss. The risk is not just financial: trust in digital identity and communication channels is eroding as AI cloning becomes more accessible.

This points to a broader trend: AI misuse is not limited to code or malware. It now threatens human perception, trust, and digital fairness at massive scale.

AI-Generated code risks and security blind spots

As organisations increasingly adopt AI coding assistants, new security challenges emerge. Research indicates that AI-generated code now constitutes a significant share of enterprise development, yet much of it contains flaws.

Some studies suggest that nearly 24 % of code in production may be AI-generated, and a concerning portion of security breaches involve such code.

Developers trusting AI outputs without thorough review creates an “illusion of correctness,” increasing the chance that code vulnerabilities slip into critical systems.

Sophistication gap between defenders and attackers

Surveys reveal a troubling perception gap: many security leaders admit that the technology behind cyberattacks may now be more sophisticated than their own defence capabilities. This underscores the urgency of ensuring defensive AI keeps pace with offensive AI.

High-Impact AI use cases in cybersecurity

To fully grasp AI’s role, it helps to explore specific practical applications – and how they’re shaping modern security operations.

AI-Driven vulnerability discovery and prioritisation

AI can scan codebases, dependencies, and configurations far more quickly and comprehensively than any human team. Models trained on vulnerability taxonomies, exploit patterns, and threat indicators can identify weaknesses that traditional static analysis often misses.

In addition, AI can prioritise vulnerabilities based on real-world exploit data, asset criticality, and attack context. This ransomware-aware prioritisation helps organisations focus on the issues that pose the greatest risk — not simply those with high CVSS scores.

Behavioural threat detection and anomaly analytics

AI is reshaping threat detection. Instead of relying solely on signature-based detection, behavioural models analyse user activity, network flows, and endpoint telemetry to spot outliers.

This approach is especially effective against:

- Insider threats

- Credential abuse

- Non-malware lateral movement

- Cloud misconfigurations

AI’s machine learning models build profiles of “normal” and alert on deviations in real time – dramatically improving detection accuracy and response quality.

Automated incident response

Once a threat is detected, AI can help orchestrate response workflows. Automated triage can sift through alerts, correlate related signals, and even suggest containment actions. In practice, this means faster containment with less analyst fatigue.

Several organisations report 30 % or greater reductions in mean time to respond (MTTR) for incidents involving AI-assisted workflows – a critical benefit when minutes make the difference.

Integration into DevSecOps and secure development

AI tools are now embedding into DevSecOps pipelines, scanning code for vulnerabilities as part of continuous integration and delivery (CI/CD). This shifts security left – detecting issues earlier and reducing the cost of fixes.

Unlike traditional scanners, AI doesn’t just check patterns – it can reason about business logic, chained weaknesses, and cross-module interactions.

Human centric security training and simulations

AI can generate custom phishing simulations, personalised security education, and adaptive threat training. Organisations using AI-enabled awareness training have seen phishing susceptibility drop by over 50 % among employees – a meaningful behavioural change.

Strategic governance: balancing innovation with control

Given the dual nature of AI, successful deployment requires thoughtful governance.

- Human-In-The-Loop oversight: AI should augment human decision-making, not replace it – especially for actions with high potential impact. By keeping humans involved in critical decisions, organisations preserve judgement and accountability.

- Technical guardrails and access controls: Governance is not just policy – it must be technical and enforceable. Role-based access, usage monitoring, response logging, and model versioning ensure AI capabilities are used responsibly and transparently.

- Continuous testing and model evaluation: Threat landscapes evolve rapidly. AI models must be continuously evaluated for drift, performance degradation, and susceptibility to manipulation. Red-teaming AI outputs and adversarial testing techniques help organisations identify weaknesses before attackers do.

- Cross-Functional collaboration: AI governance cannot be siloed in security teams alone. Collaboration with legal, compliance, risk, and development functions ensures alignment with business needs, regulatory requirements, and ethical considerations.

The broader cybersecurity context: trends, threats, and innovation

AI in cybersecurity does not exist in a vacuum. It intersects with wider industry forces:

Escalating AI-Driven threat frequency

A growing majority of security leaders view AI-driven attacks as their top concern. Across industries, automated phishing campaigns, AI-powered malware, and deepfake scams are on the rise, accounting for a growing portion of incident reports.

Nation-State and hybrid threat actors

State actors are adopting AI to scale espionage, misinformation, and intrusion campaigns. Reports show that hostile nations use AI tools to automate phishing and content manipulation at volumes far beyond manual operations.

This trend blurs the line between cybercrime and digital conflict, making AI governance a matter of national as well as organisational security.

Rapid market growth and regulatory pressure

The AI cybersecurity market continues to expand, with forecast growth into the tens of billions of dollars by the end of the decade. Mounting regulatory requirements — from EU data protection laws to emerging national AI safety standards — are pushing organisations to adopt both governance frameworks and transparency mechanisms.

Global collaboration and standardisation

International reports and AI safety coalitions are shaping global best practices. The latest International AI Safety Report involves contributions from experts across 30 countries, reflecting a shared desire to manage AI risks while enabling positive innovation.

Conclusion

AI’s integration into cybersecurity is inexorable. It has already transformed defensive capabilities – faster detection, deeper insight, and more efficient response. Yet it also introduces new offensive risks, expanded attack surfaces, and strategic challenges that long-standing security models never anticipated.

For organisations to thrive, AI cannot be an unregulated utility. It must be governed, monitored, and human-cantered – a strategic tool, not an autonomous authority. The future of cybersecurity will not be human versus AI, but human with AI, working together within boundaries of accountability, transparency, and foresight.

At CyberNX, our team of certified professionals and experienced experts power multiple cybersecurity services such as red teaming, pentesting, SOC, MDR and SBOM with AI, however, with sufficient guardrails. In case you want great clarity on the use of AI for cybersecurity initiatives or otherwise in your organisation, feel free to contact us today.

AI doubled-edged sword for business security FAQs

Can AI fully replace human security analysts?

No. AI amplifies capability but cannot replicate human judgement, ethics, and strategic decision-making – especially in high-impact incidents.

How can organisations mitigate AI-generated code risks?

Implement code review standards, enforce Software Bills of Materials (SBOMs), and integrate static and dynamic testing into pipelines.

Are AI-generated phishing attacks more effective than traditional ones?

Yes. AI can personalise scams at scale, making them harder to detect and increasing success rates compared to generic phishing.

Should organisations build their own AI cybersecurity tools or rely on vendors?

A hybrid approach often works best: leverage vendor innovations for core capabilities while tailoring internal governance and integration.