Generative AI has moved from pilot projects to production systems at striking speed. Among the most discussed innovations is Claude Code, developed by Anthropic.

For enterprises rethinking how software is built, reviewed, and secured, Claude Code signals more than convenience. It represents a shift toward AI-augmented development environments where human engineers collaborate with machine intelligence in real time.

For CISOs and IT leaders, the conversation quickly turns practical. Can we trust AI-generated code? How do we govern it? And most importantly, is it safe?

These are not alarmist questions. They are leadership questions.

Claude Code, explained

Claude Code is an AI-powered coding assistant that operates directly inside a developer’s terminal. Instead of manually writing functions or debugging complex logic, developers describe requirements in plain English. The system then writes, tests, fixes, and explains the code.

In a banking, financial services, and insurance (BFSI) environment, for example, a developer building a loan eligibility engine could ask the tool to generate validation logic for credit thresholds. The AI drafts the code, suggests edge-case handling, and even creates unit tests. What once took days can take hours.

For a retail enterprise modernising its payment platform, this could mean quicker API integrations. For a healthcare provider, faster updates to patient management systems.

The attraction is clear. Faster time to market. Reduced backlog. Greater developer productivity without proportional hiring.

Yet enterprise software governance cannot focus only on speed. It must balance velocity with visibility and control.

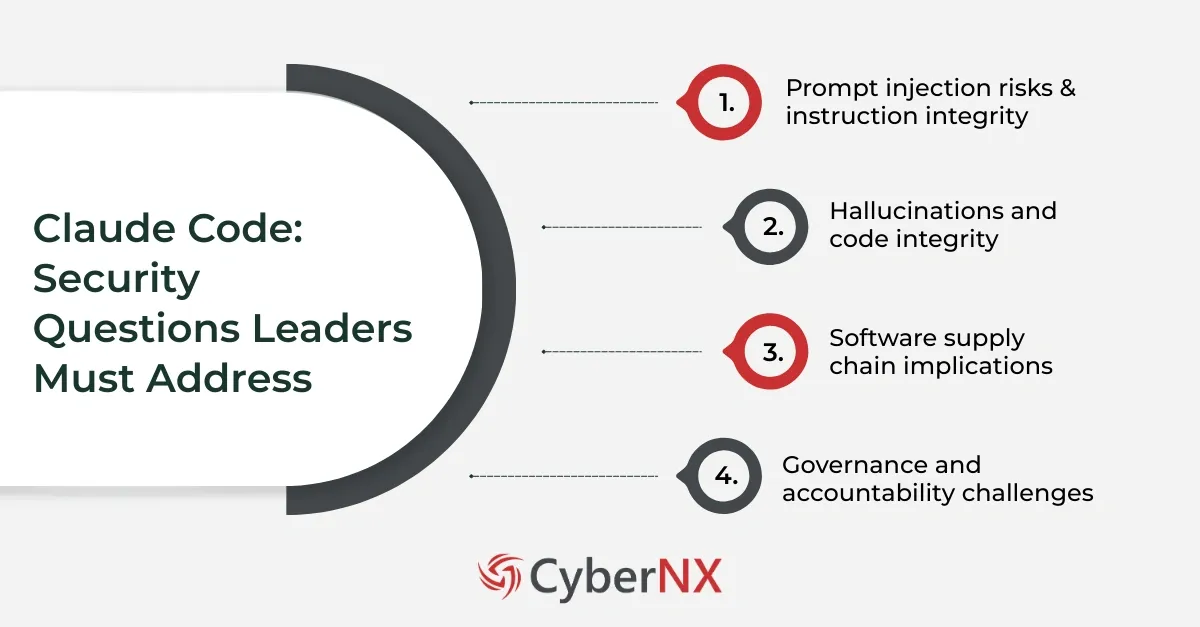

Security questions that leaders must address

AI-assisted development changes how code is created. That shift alters the risk landscape. Let us explore the key governance concerns.

1. Prompt injection risks and instruction integrity

AI coding tools rely on prompts. They may pull contextual information from internal repositories, documentation, or external sources.

Prompt injection attacks manipulate these inputs. If a malicious instruction is embedded within a documentation file or third-party repository, the AI could generate compromised code. In extreme cases, it could expose sensitive logic or secrets.

Consider a scenario where a developer unknowingly references an external code snippet containing hidden instructions. The AI processes that content and injects insecure authentication logic into a production system.

This expands the security perimeter. The risk moves beyond infrastructure and into instruction integrity. Traditional secure coding checklists were not designed for this dynamic interaction model.

Enterprises must therefore implement controlled data ingestion policies and restrict AI tools from processing untrusted sources without validation.
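A controlled ingestion policy can start as a simple gate in front of the AI tool's context. The sketch below is a minimal illustration, not a complete defence: the host allowlist, the phrase patterns, and the function names are all assumptions made for this example, and real prompt injections will often evade pattern matching of this kind.

```python
import re
from urllib.parse import urlparse

# Hypothetical allowlist of hosts the organisation has vetted.
TRUSTED_HOSTS = {"git.internal.example.com", "docs.internal.example.com"}

# Illustrative phrases only; real injections vary widely and can evade simple patterns.
SUSPICIOUS_PATTERNS = [
    re.compile(r"ignore (all )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"disregard .{0,40}(policy|policies|rules)", re.IGNORECASE),
    re.compile(r"do not (tell|inform) the (user|developer)", re.IGNORECASE),
]


def is_trusted_source(url: str) -> bool:
    """Return True only when the URL uses HTTPS and its host is on the allowlist."""
    parsed = urlparse(url)
    return parsed.scheme == "https" and parsed.hostname in TRUSTED_HOSTS


def find_suspicious_phrases(text: str) -> list[str]:
    """Return the instruction-like phrases found in the text, if any."""
    hits = []
    for pattern in SUSPICIOUS_PATTERNS:
        match = pattern.search(text)
        if match:
            hits.append(match.group(0))
    return hits


def may_ingest(url: str, text: str) -> bool:
    """Allow content into the AI tool's context only if both checks pass."""
    return is_trusted_source(url) and not find_suspicious_phrases(text)
```

Content that fails either check would be routed to a human for validation rather than silently dropped, so that the policy stays visible to the teams it affects.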

2. Hallucinations and code integrity

Large language models can generate plausible but incorrect outputs. In software development, this may include:

- Incorrect API calls

- Non-existent libraries

- Weak encryption implementations

- Insecure session management patterns

The issue is not that the AI intentionally creates vulnerabilities. The issue is scale.

If a development team produces five times more code due to AI assistance, security review processes must expand accordingly. Static application security testing and peer reviews must remain mandatory.

For example, an AI tool may generate cryptographic functions that appear valid but use outdated algorithms. Without rigorous review, such flaws can slip into production.

The risk lies in over-reliance. Human validation remains essential.
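Some of these checks can be automated before a human reviewer ever sees the change. As a rough sketch, assuming the generated code is Python, the example below uses the standard library's `ast` and `importlib` modules to flag imports that do not resolve in the current environment and calls to `hashlib.md5` or `hashlib.sha1`. The function names are hypothetical, and the checks are deliberately narrow; they complement static application security testing rather than replace it.

```python
import ast
import importlib.util

# Hash functions widely regarded as unsuitable for security purposes.
WEAK_HASHES = {"md5", "sha1"}


def unresolved_imports(source: str) -> list[str]:
    """Return top-level module names imported by the source that cannot be found locally."""
    missing = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            names = [node.module]
        else:
            continue
        for name in names:
            top_level = name.split(".")[0]
            if importlib.util.find_spec(top_level) is None and top_level not in missing:
                missing.append(top_level)
    return missing


def weak_hash_calls(source: str) -> list[int]:
    """Return line numbers of calls such as hashlib.md5(...) or hashlib.sha1(...)."""
    lines = []
    for node in ast.walk(ast.parse(source)):
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Attribute)
            and isinstance(node.func.value, ast.Name)
            and node.func.value.id == "hashlib"
            and node.func.attr in WEAK_HASHES
        ):
            lines.append(node.lineno)
    return sorted(lines)
```

A module that does resolve is not necessarily the right one, and a hash call that passes this check is not necessarily used safely, which is why the output is best treated as input to a review and not as a verdict.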

3. Software supply chain implications

AI-assisted coding introduces new dimensions to software supply chain security.

If generated code incorporates open-source packages, how are licences tracked? If the tool recommends dependencies, how are vulnerabilities monitored?

Recent supply chain attacks have shown that even trusted libraries can become entry points. AI does not eliminate this exposure. It reshapes it.

Imagine a fintech firm using AI to accelerate microservices development. The tool suggests a third-party payment library. Months later, a vulnerability is disclosed in that dependency. Without continuous monitoring and SBOM discipline, the risk remains invisible.

Enterprises must strengthen Software Bill of Materials practices, automate dependency scanning, and integrate security validation into CI pipelines.
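To make the fintech scenario concrete, the sketch below cross-checks an SBOM's component list against a set of advisories. It is an illustration under stated assumptions: the `components` list with `name` and `version` fields loosely follows the shape used by SBOM formats such as CycloneDX, while the `Advisory` type, the package names, and the advisory itself are invented for this example. A production pipeline would rely on a maintained vulnerability feed and proper version-range matching.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Advisory:
    package: str
    affected_versions: frozenset[str]
    summary: str


def find_vulnerable_components(sbom: dict, advisories: list[Advisory]) -> list[str]:
    """Return 'name==version: summary' for each SBOM component matching an advisory."""
    findings = []
    for component in sbom.get("components", []):
        name, version = component["name"], component["version"]
        for advisory in advisories:
            if name == advisory.package and version in advisory.affected_versions:
                findings.append(f"{name}=={version}: {advisory.summary}")
    return findings


# Hypothetical data for illustration; the packages and the advisory are invented.
sbom = {
    "components": [
        {"name": "examplepay-client", "version": "2.3.1"},
        {"name": "http-helper", "version": "1.0.4"},
    ]
}
advisories = [
    Advisory("examplepay-client", frozenset({"2.3.0", "2.3.1"}), "token leak in retry path"),
]

findings = find_vulnerable_components(sbom, advisories)
```

Run on a schedule inside the CI pipeline, a check of this kind surfaces a newly disclosed vulnerability in a dependency that was added months earlier, which is the gap the scenario describes.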

4. Governance and accountability challenges

AI-assisted development raises a question that is simple to ask and hard to answer. Who is accountable?

If a vulnerability emerges from AI-generated code, responsibility cannot be outsourced to the tool provider. Governance frameworks must clearly define ownership.

Boards are increasingly asking about AI oversight, model risk management, and compliance exposure. Regulatory bodies are also exploring AI accountability standards.

Enterprises should therefore embed AI coding tools within structured governance frameworks that include:

- Role-based access controls

- Comprehensive logging

- Audit trails

- Mandatory code review checkpoints

- Model usage policies

Without governance, productivity gains may outpace risk visibility.
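Several of the controls listed above can be combined in a single merge gate. The following sketch assumes a hypothetical role model and an in-memory audit trail; the role names, permission strings, and `Change` type are illustrative and do not reflect any specific platform's API. It shows role-based approval, an audit entry for every decision, and a mandatory review checkpoint for AI-generated changes.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical role-to-permission mapping.
ROLE_PERMISSIONS = {
    "developer": {"use_ai_assistant"},
    "security_reviewer": {"use_ai_assistant", "approve_ai_change"},
}


@dataclass
class Change:
    change_id: str
    author: str
    ai_generated: bool
    approvals: set[str] = field(default_factory=set)


# In-memory audit trail; a real deployment would write to tamper-evident storage.
audit_log: list[dict] = []


def record(event: str, actor: str, change_id: str) -> None:
    """Append a timestamped entry to the audit trail."""
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "event": event,
        "actor": actor,
        "change_id": change_id,
    })


def approve(change: Change, reviewer: str, role: str) -> bool:
    """Record an approval if the role permits it and the reviewer is not the author."""
    permitted = "approve_ai_change" in ROLE_PERMISSIONS.get(role, set())
    allowed = permitted and reviewer != change.author
    if allowed:
        change.approvals.add(reviewer)
    record("approval_granted" if allowed else "approval_denied", reviewer, change.change_id)
    return allowed


def can_merge(change: Change) -> bool:
    """AI-generated changes need at least one recorded human approval."""
    return not change.ai_generated or len(change.approvals) >= 1
```

Denied approvals are logged as well as granted ones, so the audit trail shows who attempted to approve a change and not only who succeeded.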

AI as both risk and remedy

The conversation becomes more nuanced here. The same AI capabilities that introduce new risks can also strengthen enterprise software governance.

AI-driven static and dynamic code analysis can detect insecure patterns early. Automated compliance validation can flag deviations from policy. Context-aware threat modelling can help development teams anticipate abuse cases before release.

For example, an organisation could use AI not only to generate code but also to scan repositories continuously for insecure authentication flows. Another enterprise might deploy AI to cross-reference code against internal security baselines in real time.

When implemented thoughtfully, AI becomes part of the defence architecture.

The question is not whether to use AI coding tools. The question is how to integrate them within a disciplined governance model.

Building a responsible AI-augmented development environment

Enterprises exploring Claude Code should adopt a phased approach.

First, define clear usage boundaries. Not every system should be AI-assisted initially. Start with lower-risk applications and measure outcomes.

Second, align DevSecOps pipelines with AI output volume. If productivity doubles, validation must scale proportionally.

Third, implement continuous monitoring for AI-suggested dependencies and external integrations.

Fourth, invest in developer training. Teams must understand both the strengths and limitations of AI coding assistants.

Our experience shows that governance frameworks do not slow innovation. They enable sustainable growth. When security and development leaders collaborate early, AI adoption becomes controlled rather than chaotic.

The strategic outlook for enterprise leaders

Claude Code reflects a broader transformation in enterprise software governance. AI is embedding itself into core development workflows.

Organisations that resist this shift risk slower innovation. Yet those that adopt it blindly may expose themselves to avoidable threats. The winning approach combines experimentation with structure. Clear oversight. Continuous validation. Transparent accountability. Enterprise software governance must evolve alongside AI capabilities. That evolution requires foresight, policy alignment, and measurable controls.

Claude Code is not simply a productivity tool. It is also the start of a governance conversation.

Conclusion

Claude Code marks an important step in AI-augmented development. It can accelerate delivery, improve developer efficiency, and enhance competitiveness. However, enterprise software governance must mature in parallel. Prompt injection risks, hallucination errors, supply chain exposure, and accountability gaps demand structured oversight.

At CyberNX, we work alongside enterprise leaders to design AI governance frameworks that protect innovation while managing risk. If your organisation is evaluating AI coding tools, let us help you build the controls that make adoption both secure and sustainable.

Connect with our experts today for a strategic consultation and strengthen your enterprise software governance for the AI era.

Claude Code and enterprise software governance FAQs

1. Can Claude Code access confidential enterprise data?

Access depends on deployment configuration. Enterprises must define strict access controls, restrict data ingestion sources, and ensure sensitive repositories are not exposed without governance safeguards.

2. Does AI-generated code meet regulatory compliance standards?

AI-generated code does not automatically meet compliance requirements. Organisations must validate it against internal policies and the regulations that apply to their sector, such as those governing financial services or healthcare.

3. How should CISOs measure the risk of AI coding tools?

CISOs should assess data exposure risk, supply chain impact, dependency management maturity, logging capabilities, and alignment with existing DevSecOps controls.

4. Can AI coding tools replace secure code reviews?

No. AI can assist with code analysis, but human oversight and formal security testing remain critical for enterprise-grade assurance.